Conway's Law Isn't the Problem. Your Ownership Model Is.

How unclear capability ownership turned one fulfillment pipeline into two competing systems.

Written for engineering leaders and architects who've seen duplicate capabilities emerge across teams.

Two teams built the same system. Neither knew the other existed. Both shipped on time. Both got promoted. Together they created a reconciliation layer that still wakes someone up at 3am.

I've worked at payments companies, insurance companies, and warranty companies. I see this pattern when companies get large. Two teams solve the same problem in parallel because the org chart told them they were working on different things. Nobody discovers the overlap until something forces the codebases together. Then you spend three quarters untangling it.

But to protect the innocent (and my LinkedIn connections), let me tell you about something that definitely never happened at any real company: the Great Taco Fulfillment Incident.

A company (let's call them TacoCorp) had a taco order fulfillment system. An order comes in. It gets validated, prepared, and routed to a delivery channel: pickup counter, delivery driver, or the premium option (a mariachi band hand-delivers it to your desk). Different channels, same core workflow: validate order, confirm ingredients, notify the customer, execute delivery.

Simple enough. One pipeline. One team. Worked fine.

The Business Case That Started It All

But only 65% of orders were fully automated. The rest required manual processing because TacoCorp's downstream operations system (let's call it TacoForce) relied on replicated data and poor technology choices that blocked further automation. Staff would come in the next morning, open TacoForce, and process orders one by one. Suppliers didn't get their tacos until the following business day.

Then a VP of Operations ran the numbers. With 15 manual operators at ~$65K each processing roughly 400 orders per day, the VP estimated each automation percentage point was worth about $250K a year. Getting from 65% to 90% was a $6.25M annual opportunity. Same-day fulfillment. Lower error rates. Suppliers paid faster. The business case was undeniable.

So the VP's team built a new pipeline. Event-driven, same-day delivery, direct integrations bypassing TacoForce entirely. New delivery channels: bulk partner files, automated phone ordering, direct processor integrations. Real-time execution instead of next-morning manual batches.

The business case was right. The implementation path was where it broke down.

They rebuilt validate, prepare, and notify from scratch instead of extending the existing executor. The original pipeline (Express Kitchen) already had this architecture. It was extensible. It had the channel routing. But nobody extended it.

Two different teams built the same capability.

Not similar. Not adjacent. The same thing.

Both subscribed to the same trigger. Both reconstructed the same workflow. Both routed to overlapping delivery channels, just through slightly different paths. Customers were getting double-delivered tacos. Which, to be fair, is the only good kind of duplicate.

And this wasn't accidental.

Conway's Law (The Part People Skip)

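"Organizations which design systems ... are constrained to produce designs which are copies of the communication structures of these organizations."

Melvin Conway, 1967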

What you don't hear is what happens when:

- Ownership is unclear

- Boundaries are blurred

- Teams are optimized for delivery, not domains

That's when Conway's Law stops being descriptive and starts becoming expensive.

The Two Failure Modes

1. Blended Bounded Contexts

Capabilities don't belong to anyone. Pieces of the same domain (like, say, taco fulfillment) exist across multiple platforms. Teams unknowingly co-own behavior. Logic leaks across boundaries.

"Where does this actually live?"

2. Duplicate Capabilities

Two teams solve the same problem independently. Different leaders, different priorities, slightly different interpretations of what "fulfilled" means. Same events, same workflows, same outcomes.

At first, it looks like speed.

What Should Have Happened

One topic. One executor. Different delivery channels routed by type. The common workflow (validate, prepare, notify) runs once. The only divergence is the final delivery step.

flowchart TD

EVT["OrderCreated"] --> TOPIC["Service Bus Topic"]

TOPIC --> SUB["Subscription"]

SUB --> EXEC["Fulfillment Executor"]

EXEC --> V["Validate"]

V --> P["Prepare"]

P --> N["Notify"]

N --> CH{"Route by Type"}

CH --> C1["Counter Pickup"]

CH --> C2["Driver Delivery"]

CH --> C3["Bulk Partners"]

CH --> C4["Phone / IVR"]

CH --> C5["Direct Processor"]

CH --> C6["Premium"]

style EVT fill:#1a1040,stroke:#a78bfa,color:#f5f5fa

style TOPIC fill:#1e293b,stroke:#a78bfa,color:#f5f5fa

style SUB fill:#1e293b,stroke:#a78bfa,color:#f5f5fa

style EXEC fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa

style V fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa

style P fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa

style N fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa

style CH fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C1 fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C2 fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C3 fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C4 fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C5 fill:#0d1520,stroke:#34d399,color:#f5f5fa

style C6 fill:#0d1520,stroke:#34d399,color:#f5f5fa

This assumes the existing executor was designed for extensibility: a clear channel interface, test coverage, and a team willing to accept contributions. When those preconditions don't exist, the first investment should be making the system extensible, not building around it.
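A minimal Python sketch of the shape in the diagram, assuming a hypothetical `FulfillmentExecutor` with a dict of channel handlers keyed by order type. None of these names come from TacoCorp's (or anyone's) codebase, and the Service Bus subscription is replaced by a direct method call. The shared steps run once per order; only the final delivery step varies.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Order:
    order_id: str
    channel: str  # e.g. "counter_pickup", "driver_delivery", "premium"
    items: List[str]


# A channel handler performs only the final, channel-specific delivery step.
ChannelHandler = Callable[[Order], str]


@dataclass
class FulfillmentExecutor:
    """Hypothetical single executor: one shared workflow, many delivery channels."""
    channels: Dict[str, ChannelHandler] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)

    def register_channel(self, name: str, handler: ChannelHandler) -> None:
        # The extension point: a new channel is a new handler, not a new pipeline.
        if name in self.channels:
            raise ValueError(f"channel {name!r} already has a handler")
        self.channels[name] = handler

    def handle(self, order: Order) -> str:
        # Fail fast on an unknown channel before doing any work.
        handler = self.channels.get(order.channel)
        if handler is None:
            raise LookupError(f"no delivery channel registered for {order.channel!r}")
        # Shared workflow, run exactly once per order, whatever the channel.
        self._validate(order)
        self._prepare(order)
        self._notify(order)
        # The only divergence: route by type to the delivery step.
        return handler(order)

    def _validate(self, order: Order) -> None:
        if not order.items:
            raise ValueError(f"order {order.order_id} has no items")
        self.log.append(f"validated {order.order_id}")

    def _prepare(self, order: Order) -> None:
        self.log.append(f"prepared {order.order_id}")

    def _notify(self, order: Order) -> None:
        self.log.append(f"notified customer for {order.order_id}")


executor = FulfillmentExecutor()
executor.register_channel("counter_pickup", lambda o: f"{o.order_id} ready at counter")
executor.register_channel("premium", lambda o: f"{o.order_id} dispatched with mariachi band")
print(executor.handle(Order("T-1", "premium", ["al pastor"])))  # T-1 dispatched with mariachi band
```

Adding bulk partner files or phone ordering would be one more `register_channel` call, which is the extension path the duplicate pipeline skipped.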

What Actually Happened

Same topic. Two subscriptions. Two executors rebuilding the same workflow. One reconciliation layer that shouldn't exist.

flowchart TD
EVT["OrderCreated"] --> TOPIC["Service Bus Topic"]
TOPIC --> SUBA["Subscription A"]
TOPIC --> SUBB["Subscription B"]
SUBA --> EA["Executor A"]
SUBB --> EB["Executor B"]
EA --> VA["Validate"]
EB --> VB["Validate"]
VA --> PA["Prepare"]
VB --> PB["Prepare"]
PA --> NA["Notify"]
PB --> NB["Notify"]
NA --> CA["Pickup + Delivery"]
NB --> CB["Premium"]
CA --> SYNC["Reconciliation Layer"]
CB --> SYNC
style EVT fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
style TOPIC fill:#1e293b,stroke:#a78bfa,color:#f5f5fa
style SUBA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style SUBB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style EA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style EB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style VA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style PA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style NA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style CA fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style VB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style PB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style NB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style CB fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style SYNC fill:#1a1000,stroke:#fbbf24,color:#fbbf24

Blue = original executor (Team Alpha). Red = duplicate executor (Team Beta). Both run Validate → Prepare → Notify, the same workflow, rebuilt from scratch. Yellow = the reconciliation layer no one planned for.
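The overlap in this diagram is mechanical enough to spot from metadata alone. Below is a deliberately simple heuristic sketch (set similarity, not AI tooling). It assumes each service declares, in some hypothetical manifest, which topic it subscribes to and which workflow steps it runs, and flags pairs that share a topic and most of their steps. Service names, topic names, and the 0.5 threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class ServiceManifest:
    name: str
    topic: str             # the event topic the service subscribes to
    steps: FrozenSet[str]  # workflow steps the service declares it runs


def jaccard(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """|A ∩ B| / |A ∪ B|, defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_overlaps(
    services: List[ServiceManifest], threshold: float = 0.5
) -> List[Tuple[str, str, float]]:
    """Flag service pairs on the same topic whose workflow steps mostly overlap."""
    flagged = []
    for s1, s2 in combinations(services, 2):
        if s1.topic != s2.topic:
            continue  # different triggers: not this kind of duplicate
        score = jaccard(s1.steps, s2.steps)
        if score >= threshold:
            flagged.append((s1.name, s2.name, round(score, 2)))
    return flagged


services = [
    ServiceManifest("executor-a", "order-created",
                    frozenset({"validate", "prepare", "notify", "deliver-pickup"})),
    ServiceManifest("executor-b", "order-created",
                    frozenset({"validate", "prepare", "notify", "deliver-premium"})),
    ServiceManifest("loyalty-points", "order-created", frozenset({"award-points"})),
]
print(find_overlaps(services))  # [('executor-a', 'executor-b', 0.6)]
```

A shared topic alone isn't a smell (the loyalty service legitimately subscribes too). It's a shared topic plus a shared workflow that suggests two teams are building the same capability.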

The Cost of "Speed"

What looked like acceleration was actually fragmentation.

The "expected" estimate is based on comparable extensions to the existing pipeline, not a formal scope analysis. Retroactive estimates are inherently optimistic, but even if you double the estimate, the duplicate path was still more expensive.

The Fungible Team Trap

Organizations often try to "move teams where needed," where any team can build any capability.

Why This Isn't Just a Microservices Problem

It's easy to blame architecture style. But the relationship is more causal than correlational.

Microservices actively encourage independent deployment and independent ownership. Combined with unclear domain boundaries, that's a direct enabler of duplicate capabilities. The architecture style didn't just "make it easier." It created the conditions where building a second system felt like the natural, fast, correct thing to do.

What happened

A new service was built alongside the existing one, enabled by microservices' low coupling.

What should have happened

A new processor added to the existing pipeline. That path required cross-team coordination, and nothing in the architecture demanded it.

The system was already extensible. It didn't need to be replaced. It needed to be extended. But microservices made the duplicate path frictionless while the extension path required navigating another team's roadmap.

When Duplication Is the Right Call

Intellectual honesty demands this section. There are cases where building independently is correct:

- The existing system is poorly designed. No clear extension points. No processor interface. Extending it would mean rewriting it, and you don't own it.

- The owning team is unresponsive. You've asked. They said "not on our roadmap." Your deadline is real. Waiting 6 months isn't an option.

- The domain has genuinely diverged. What looked like the same capability actually has different invariants, different SLAs, different correctness requirements. These are separate bounded contexts now.

- The technology stack is a mismatch. The existing system is built in a language or framework your team can't maintain.

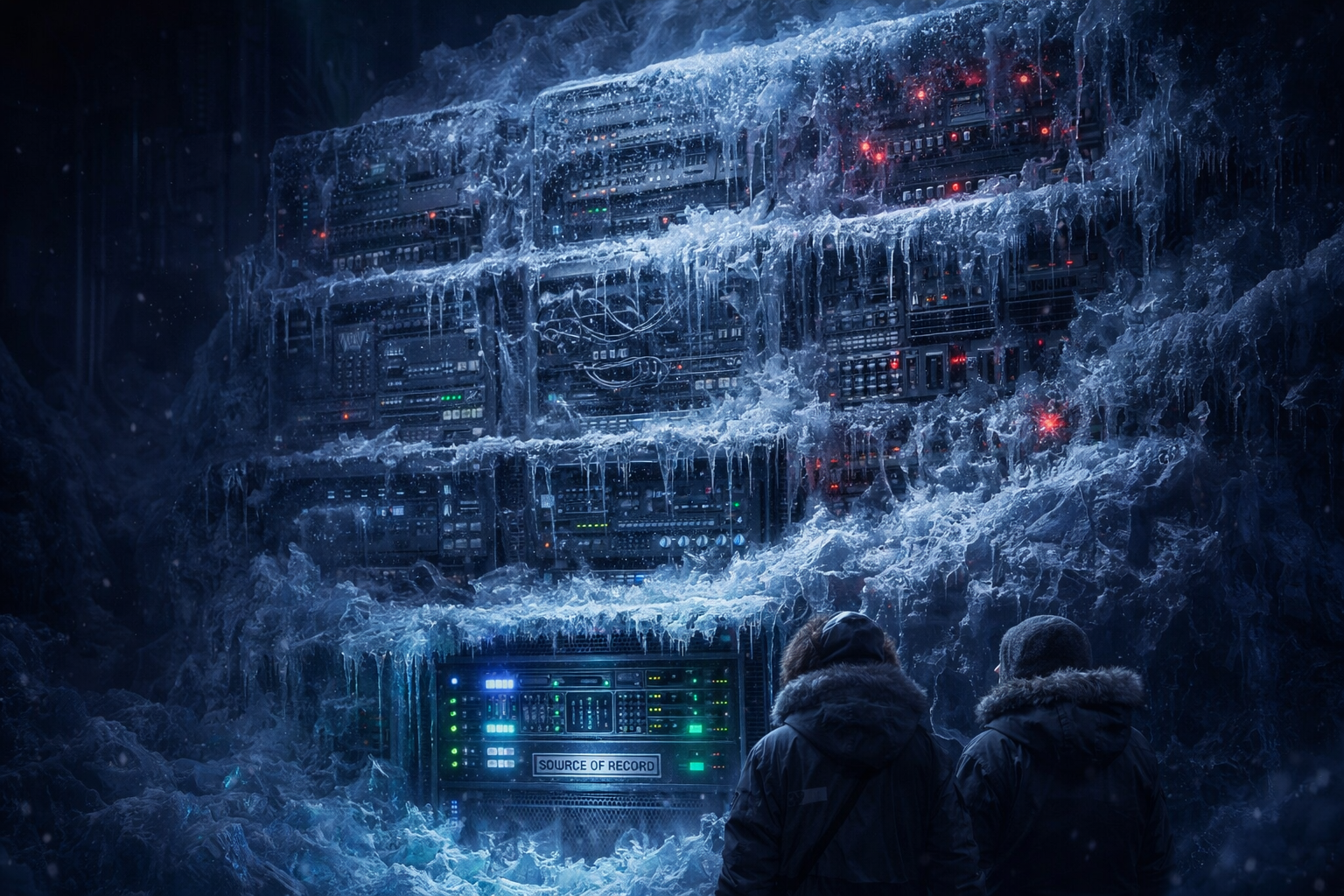

The Hidden Complexity: Data Replication

flowchart TD
SOR["Source of Record"] --> REP["Replication Layer"]
REP --> DS["Downstream System"]
DS --> LOGIC["Downstream Logic"]
LOGIC --> DEP["Hidden Dependencies"]
DEP --> BLOCK["Blocks Upstream Changes"]
style SOR fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style REP fill:#1a1040,stroke:#6e6e8a,color:#a8a8c0
style DS fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style LOGIC fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style DEP fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style BLOCK fill:#2d1010,stroke:#ef4444,color:#f5f5fa

Here's the cascade that makes data replication dangerous:

1. Data is replicated. Teams copy data downstream for performance or convenience. Seems harmless.

2. Teams build on replicated data. New features depend on the copy, not the source. The copy is "good enough."

3. Copy becomes "pseudo-source of truth." Adjacent teams treat the downstream system as authoritative. The original feels stale.

4. Original system becomes hard to change. Upstream modifications risk breaking downstream consumers you didn't know existed. You're frozen.
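Step 4 is a reachability problem: a change at the source of record can reach every system downstream of any copy. A minimal sketch, assuming you have (or can assemble) a hypothetical map of "who reads from whom". The system names mirror the diagram above, plus one illustrative adjacent-team consumer.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical lineage map: system -> systems that read from it.
READS_FROM_ME: Dict[str, List[str]] = {
    "source_of_record": ["replication_layer"],
    "replication_layer": ["downstream_system"],
    "downstream_system": ["downstream_logic", "adjacent_team_report"],
    "downstream_logic": [],
    "adjacent_team_report": [],
}


def blast_radius(system: str, graph: Dict[str, List[str]]) -> Set[str]:
    """Return every system that transitively depends on `system` (breadth-first)."""
    seen: Set[str] = set()
    queue = deque(graph.get(system, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # already visited; also guards against cycles
        seen.add(current)
        queue.extend(graph.get(current, []))
    return seen


print(sorted(blast_radius("source_of_record", READS_FROM_ME)))
```

The frozen upstream in step 4 is what happens when this map doesn't exist: the blast radius is unknowable, so every change looks risky.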

The "Helpful Team" Anti-Pattern

This doesn't happen because teams are careless. It happens because they're trying to help, and given the organizational incentives, the duplicate system was a predictable outcome.

Filling Gaps

Teams see unmet needs and step in to solve them, fast.

Moving Faster

Building your own is quicker than waiting for another team's roadmap.

Getting Rewarded

Visible output gets recognized. System coherence doesn't.

The failure wasn't in the teams; it was in the incentive structure. Until ownership becomes a constraint rather than a suggestion, building the duplicate is the rational move, and the teams were acting rationally.

Shadow IT Isn't Always Rogue

This is uncomfortable but true. Shadow systems aren't always rebellion. They're often leadership-aligned (built because a leader asked for it), roadmap-driven (prioritized by a product team with legitimate goals), and incentivized (teams optimize for visibility, not system coherence).

The mechanism is specific and repeatable. A VP identifies a gap. Say the existing fulfillment pipeline doesn't support a new delivery channel they need by Q3. The owning team says "that's on our Q1 next year roadmap." The VP's team has engineers and a deadline. They don't go rogue; they go to their sprint planning, create a Jira epic, and start building. Their leadership reviews it. Their product manager prioritizes it. Their architects design it. It passes every internal gate because, within that team's bounded view, it is a new system. The duplicate only becomes visible when both systems are in production and a customer gets two confirmation emails.

This is fundamentally different from a rogue developer spinning up an unauthorized AWS account. Leadership-sanctioned shadow IT has budget, headcount, and roadmap space. It looks like good execution from the inside. The problem isn't governance failure at the team level; it's the absence of a cross-team capability registry that would surface the overlap before the first line of code is written. In the TacoCorp scenario, Team Beta's pipeline passed every review gate their VP set up. The gate that was missing was the one that asked: "Does this capability already have an owner?"

When the Owning Team Says No

This is the hardest real-world scenario and the one most articles on this topic ignore. The review gate says "this is a duplicate," but the owning team says "that's not on our roadmap for 6 months." Now what?

Why It Didn't Merge Sooner

Ironically, the "faster" path took longer.

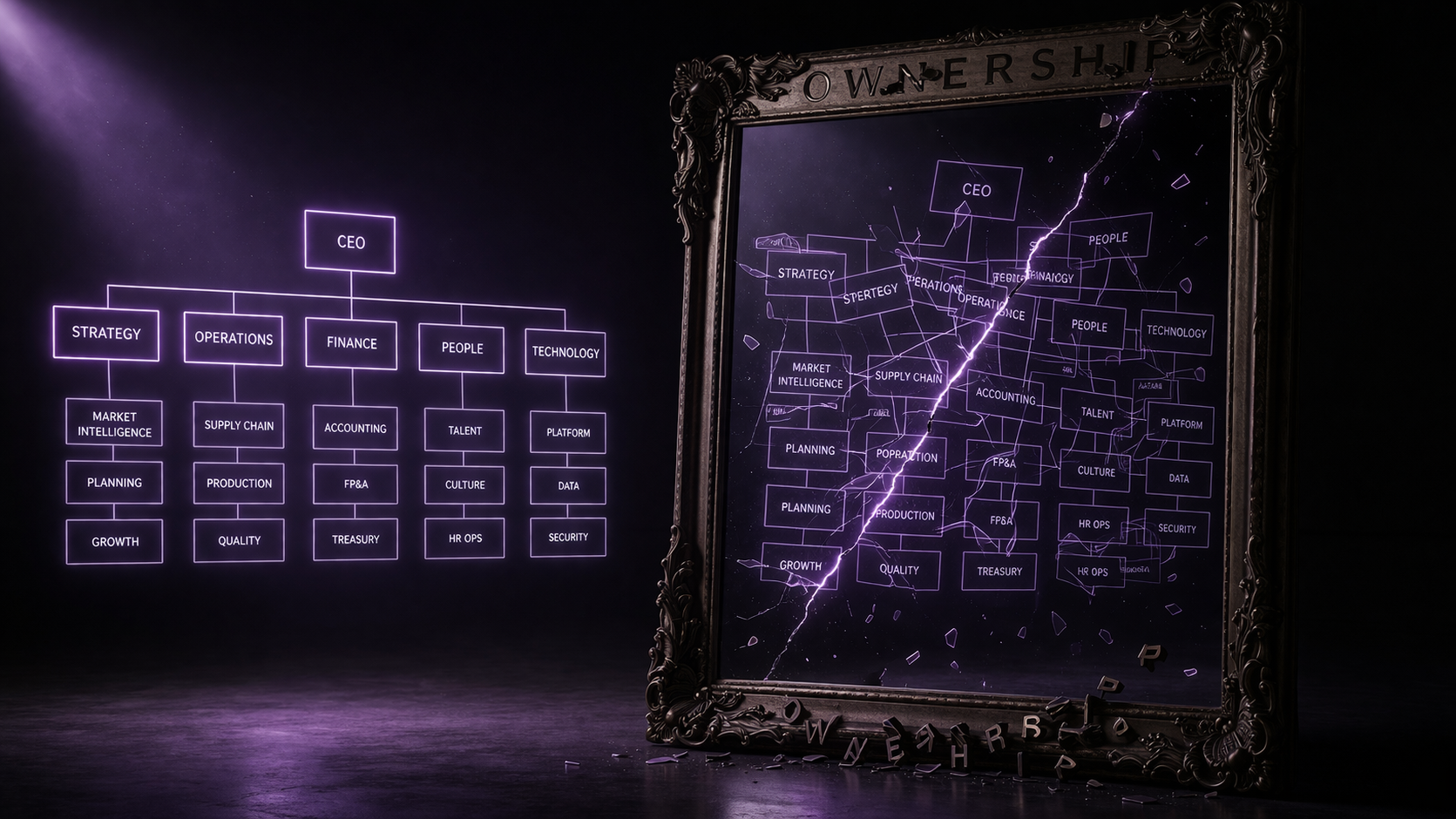

The Org-Architecture Mirror

Two VPs, two teams, two systems. The architecture mirrored the org chart perfectly.

flowchart TB
subgraph ORG["Organization"]
direction TB
VP1["VP, Fulfillment"] --> T1["Team Alpha"]
VP2["VP, Platform"] --> T2["Team Beta"]
end
subgraph SYS["System Architecture"]
direction TB
SB["Shared Service Bus"] --> S1["Fulfillment Pipeline A"]
SB --> S2["Fulfillment Pipeline B"]
end
T1 -. "builds" .-> S1
T2 -. "builds" .-> S2
style ORG fill:#0f0f1a,stroke:#a78bfa,color:#f5f5fa
style SYS fill:#0f0f1a,stroke:#ef4444,color:#f5f5fa
style VP1 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
style VP2 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
style T1 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style T2 fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style S1 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style S2 fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style SB fill:#1a1000,stroke:#fbbf24,color:#fbbf24

The org structure dictated the system boundaries. Not the other way around.

Conway's Law: Your Options

Ignore

Fragmentation. Duplicate systems. Escalating maintenance costs.

Accept

Org-aligned architecture. Predictable but potentially suboptimal boundaries.

Invert

Architecture-driven org. The Inverse Conway Maneuver: design the org to match the system you want.

What "Invert" Actually Looks Like

Most articles stop at "invert Conway's Law." Here's what that means in practice:

Each option has tradeoffs a VP could evaluate against their org's constraints. The point is: these are concrete structural changes, not platitudes about "aligning teams."

The Interaction Model

The prescription "extend, don't duplicate" raises the question: how do teams contribute to systems they don't own?

Matthew Skelton and Manuel Pais' Team Topologies provides the framework. Three interaction modes determine whether cross-team extension is feasible:

Collaboration

Two teams work together closely for a defined period. High bandwidth, high cost. Good for discovery. Bad as a permanent state.

X-as-a-Service

One team provides a capability with a clear interface. Other teams consume it. Low coupling. Requires the owning team to maintain the interface as a product.

Facilitating

A team helps another team build a capability, then steps back. Temporary by design. Useful for bridging skill gaps.

The Capability Map They Never Drew

Here's what a DDD practitioner would have drawn before anyone wrote a line of code. This is TacoCorp's taco fulfillment domain decomposed into capabilities:

flowchart TD
L1["Order Management"]
L2["Taco Fulfillment"]
L3A["Order Validation"]
L3B["Order Preparation"]
L3C["Delivery Channel Mgmt"]
L3D["Fulfillment Execution"]
L3E["Transaction Tracking"]
CH1["Counter Pickup"]
CH2["Driver Delivery"]
CH3["Bulk Partner Files"]
CH4["Phone Ordering / IVR"]
CH5["Direct Processor Integration"]
CH6["Premium / Mariachi"]
L1 --> L2
L2 --> L3A
L2 --> L3B
L2 --> L3C
L2 --> L3D
L2 --> L3E
L3C --> CH1
L3C --> CH2
L3C --> CH3
L3C --> CH4
L3C --> CH5
L3C --> CH6
style L1 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
style L2 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
style L3A fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style L3B fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style L3C fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style L3D fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style L3E fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style CH1 fill:#0d1520,stroke:#34d399,color:#f5f5fa
style CH2 fill:#0d1520,stroke:#34d399,color:#f5f5fa
style CH3 fill:#0d1520,stroke:#34d399,color:#f5f5fa
style CH4 fill:#0d1520,stroke:#34d399,color:#f5f5fa
style CH5 fill:#0d1520,stroke:#34d399,color:#f5f5fa
style CH6 fill:#0d1520,stroke:#34d399,color:#f5f5fa

Purple = domain. Blue = Level 3 capabilities (single owner each). Green = delivery channels (extensions to Delivery Channel Mgmt). Transaction Tracking (confirmation events from delivery channels) is part of the domain but was not involved in the duplication; both teams relied on the existing tracking infrastructure.

A capability in this context is a domain operation at the level where a single team can own the full lifecycle: the interface, the implementation, the on-call, and the extension path. Too coarse ("Order Management") and you can't assign meaningful ownership. Too fine ("validate-order-step-3") and you create bureaucratic overhead. Level 3 is the sweet spot: specific enough to prevent duplication, general enough to be stable across quarters.

If this map had existed, the conversation would have been obvious:

But nobody drew this map. Team Alpha owned "Express Kitchen" (a product name). Team Beta owned "TacoForce Operations" (another product name). Neither team owned the capability. They owned products, and products, unlike capabilities, don't have to be unique.

The Context Map: What DDD Would Have Shown

A DDD context map doesn't show what's inside a bounded context; it shows the relationships between them. Here's what TacoCorp's contexts actually were, and what they should have been:

flowchart LR
subgraph ACTUAL["What Happened: Separate Ways"]
EK1["Express Kitchen<br/>(Team Alpha)"]
TFO1["TacoForce Ops<br/>(Team Beta)"]
EK1 -.- |"no relationship"| TFO1
end
subgraph CORRECT["What Should Have Happened: Customer / Supplier"]
EK2["Express Kitchen<br/>(Supplier)"]
TFO2["TacoForce Ops<br/>(Customer)"]
EK2 --> |"provides channel<br/>extension interface"| TFO2
end
style ACTUAL fill:#0f0f1a,stroke:#ef4444,color:#f5f5fa
style CORRECT fill:#0f0f1a,stroke:#10b981,color:#f5f5fa
style EK1 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style TFO1 fill:#2d1010,stroke:#ef4444,color:#f5f5fa
style EK2 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
style TFO2 fill:#0d1520,stroke:#34d399,color:#f5f5fa

Left: the actual relationship, Separate Ways (both teams built independently, with no integration). Right: the correct relationship, Customer/Supplier (Express Kitchen provides the extension interface; TacoForce Ops consumes it to add new delivery channels).

What I'd Do Differently

Every domain capability gets a single owning team before the first line of code. A "capability" is a Level 3 domain operation, specific enough to prevent duplication, stable enough to survive a reorg. Maintained in a registry (spreadsheet is fine), reviewed quarterly. The registry answers: "who owns order fulfillment?" And the answer can't be "both teams."
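The spreadsheet is fine; what matters is the invariant it enforces. A minimal sketch of that invariant, assuming a hypothetical in-memory `CapabilityRegistry` keyed by the Level 3 capability names from the map above (team and capability names are illustrative):

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class CapabilityRegistry:
    """Hypothetical registry enforcing exactly one owning team per capability."""
    owners: Dict[str, str] = field(default_factory=dict)

    @staticmethod
    def _key(capability: str) -> str:
        # Normalize so "Order Validation" and "order validation " collide.
        return capability.strip().lower()

    def register(self, capability: str, team: str) -> None:
        existing = self.owners.get(self._key(capability))
        if existing is not None and existing != team:
            raise ValueError(
                f"{capability!r} is already owned by {existing}; the answer can't be both teams"
            )
        self.owners[self._key(capability)] = team

    def owner_of(self, capability: str) -> Optional[str]:
        return self.owners.get(self._key(capability))


registry = CapabilityRegistry()
registry.register("Order Validation", "Team Alpha")
registry.register("Delivery Channel Mgmt", "Team Alpha")
print(registry.owner_of("order validation"))  # Team Alpha
```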

A lightweight review gate (similar to the Catalog Gate) that asks: "Does this capability already exist? Who owns it? Why can't we extend it?" The gate needs teeth: a named architect with authority to block.
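The three gate questions translate almost directly into a decision function. A sketch under stated assumptions: the `Proposal` shape, the outcome names, and the plain dict of owners are all hypothetical. The one design choice taken from the text is that a justification doesn't auto-approve; it goes to the named architect who can block.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional, Tuple


class Outcome(Enum):
    APPROVED = "approved"
    BLOCKED = "blocked"
    ESCALATED = "escalated"  # the named architect makes the call


@dataclass
class Proposal:
    capability: str
    requesting_team: str
    why_not_extend: Optional[str] = None  # required when another team owns it


def review_gate(proposal: Proposal, owners: Dict[str, str], architect: str) -> Tuple[Outcome, str]:
    """Hypothetical gate: Does it exist? Who owns it? Why can't we extend it?"""
    owner = owners.get(proposal.capability.strip().lower())
    if owner is None:
        return Outcome.APPROVED, "new capability: register an owner before building"
    if owner == proposal.requesting_team:
        return Outcome.APPROVED, "requesting team already owns this capability"
    if not proposal.why_not_extend:
        return Outcome.BLOCKED, f"{owner} owns {proposal.capability!r}; extend it or explain why not"
    # A justification goes to someone with authority to block, not to auto-approval.
    return Outcome.ESCALATED, f"{architect} to review: {proposal.why_not_extend}"


owners = {"delivery channel mgmt": "Team Alpha"}
print(review_gate(Proposal("Delivery Channel Mgmt", "Team Beta"), owners, "Chief Architect")[0])
```

In practice the `why_not_extend` text would map to one of the cases in "When Duplication Is the Right Call", which gives the architect something concrete to accept or reject.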

Default posture: extend the existing system. But only when it's designed for extensibility. If it isn't, the first investment should be making it extensible, not building around it.

Before starting cross-team work: is this Collaboration, X-as-a-Service, or Facilitating? Document it. Make the owning team's SLA for contributions part of their team charter.
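Documenting the mode can be as small as a record checked into the owning team's repo. A sketch with hypothetical field names; the one rule it encodes comes from the interaction modes above: Collaboration and Facilitating are temporary by design, so they need an end date. The 10-day SLA is a placeholder, not a recommendation.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Mode(Enum):
    COLLABORATION = "collaboration"    # time-boxed, high bandwidth
    X_AS_A_SERVICE = "x-as-a-service"  # ongoing, through a maintained interface
    FACILITATING = "facilitating"      # time-boxed, then the facilitator steps back


@dataclass
class InteractionAgreement:
    owning_team: str
    consuming_team: str
    mode: Mode
    contribution_sla_days: int       # owning team's review SLA, from its charter
    ends_on: Optional[date] = None   # required for the temporary modes

    def validate(self) -> None:
        temporary = self.mode in (Mode.COLLABORATION, Mode.FACILITATING)
        if temporary and self.ends_on is None:
            raise ValueError(f"{self.mode.value} is temporary by design; set an end date")
        if self.contribution_sla_days <= 0:
            raise ValueError("contribution SLA must be a positive number of days")


agreement = InteractionAgreement("Team Alpha", "Team Beta", Mode.X_AS_A_SERVICE, contribution_sla_days=10)
agreement.validate()  # passes: X-as-a-Service needs no end date
```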

Add metrics for: cross-team contributions accepted, capabilities reused vs rebuilt, and time-to-extend for consuming teams. What gets measured gets maintained.
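All three metrics reduce to simple ratios over a quarter's data. A sketch assuming hypothetical per-quarter counts; the sample numbers are invented for illustration, not TacoCorp data. The median is used for time-to-extend so one stalled request doesn't dominate the figure.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional


@dataclass
class QuarterStats:
    contributions_submitted: int
    contributions_accepted: int
    capabilities_reused: int
    capabilities_rebuilt: int
    days_to_extend: List[float]  # per consuming-team extension, request to production


def ownership_metrics(q: QuarterStats) -> Dict[str, Optional[float]]:
    def ratio(num: int, den: int) -> float:
        return num / den if den else 0.0  # avoid division by zero in quiet quarters

    return {
        "acceptance_rate": ratio(q.contributions_accepted, q.contributions_submitted),
        "reuse_rate": ratio(q.capabilities_reused, q.capabilities_reused + q.capabilities_rebuilt),
        "median_days_to_extend": median(q.days_to_extend) if q.days_to_extend else None,
    }


print(ownership_metrics(QuarterStats(8, 6, 9, 1, [5, 12, 30])))
```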

Score Your Organization

How vulnerable is your org to the ownership trap? Answer honestly.

The Ownership Toolkit

If your org scored poorly above, here's how to start fixing it. Pick your starting point based on what exists today:

No governance exists

Some governance, no enforcement

Governance exists, need to scale

The Duplication Cost Formula

When you find a duplicate, quantify it for leadership. Don't say "we have duplication." Say:

(Engineers on System B × Avg Salary) // direct build cost

+ (Incidents on System B × MTTR × Hourly Eng Cost) // maintenance

+ (Reconciliation Layer Engineers × Salary) // the bridge tax

+ (Onboarding Hours × 2 systems × New Hire Rate) // cognitive load

+ (Features NOT built × Estimated Revenue Impact) // opportunity cost
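The formula is easy to turn into a small calculator so the inputs are explicit and reviewable. A sketch that follows the five terms exactly as written above, including the "× 2 systems" onboarding factor; the field names and the sample inputs are illustrative assumptions, not TacoCorp's figures.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class DuplicationInputs:
    engineers_on_b: float
    avg_salary: float
    incidents_on_b: int
    mttr_hours: float
    hourly_eng_cost: float
    reconciliation_engineers: float
    reconciliation_salary: float
    onboarding_hours: float
    new_hire_hourly_rate: float
    features_not_built: int
    revenue_impact_per_feature: float


def annual_duplication_cost(x: DuplicationInputs) -> Dict[str, float]:
    """Annual cost of a duplicate system, term by term, plus the total."""
    parts = {
        "direct_build": x.engineers_on_b * x.avg_salary,
        "maintenance": x.incidents_on_b * x.mttr_hours * x.hourly_eng_cost,
        "bridge_tax": x.reconciliation_engineers * x.reconciliation_salary,
        "cognitive_load": x.onboarding_hours * 2 * x.new_hire_hourly_rate,  # 2 systems
        "opportunity_cost": x.features_not_built * x.revenue_impact_per_feature,
    }
    parts["total"] = sum(parts.values())  # summed before "total" is added
    return parts


cost = annual_duplication_cost(DuplicationInputs(
    engineers_on_b=3, avg_salary=150_000,
    incidents_on_b=12, mttr_hours=4, hourly_eng_cost=100,
    reconciliation_engineers=1, reconciliation_salary=150_000,
    onboarding_hours=40, new_hire_hourly_rate=60,
    features_not_built=2, revenue_impact_per_feature=50_000,
))
print(cost["total"])  # 709600
```

Showing the term-by-term breakdown, not just the total, tells leadership which part of the bill consolidation actually removes.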

Worked example for TacoCorp:

But also quantify the fix: Consolidation cost = migration engineering (estimate 1-2 quarters, 2-3 engineers) + temporary velocity reduction during migration + risk of regression. For TacoCorp, that's roughly $270K-$400K, a 3-5 month payback period. That's the ROI your CFO needs.
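Payback period is the consolidation cost divided by the monthly duplication cost it removes. A sketch assuming savings start immediately and accrue evenly; real migrations usually ramp, so treat the result as a best case. The inputs are placeholders.

```python
def payback_months(consolidation_cost: float, annual_duplication_cost: float) -> float:
    """Months until consolidation pays for itself, assuming even monthly savings."""
    if annual_duplication_cost <= 0:
        raise ValueError("no recurring duplication cost, so consolidation never pays back")
    return consolidation_cost / (annual_duplication_cost / 12)


print(payback_months(300_000, 1_200_000))  # 3.0
```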

AI-Powered Duplication Detection

You don't have to find duplicates manually. Modern AI tooling can scan your codebase for capability overlap:

The Leadership Pitch

Your VP doesn't care about bounded contexts (the DDD term for clear ownership boundaries around a business capability). They care about cost, velocity, and risk. Frame it as:

"We are paying [X engineers] to maintain two systems that do the same thing. The annual cost is [$Y]. Consolidation pays for itself in [Z] months."

"Every feature change requires updates to both systems. Our effective delivery speed is halved for this capability."

"The reconciliation layer is a single point of failure that was never designed, never tested, and has no on-call owner."

That's the sentence no one wants to say in a planning meeting. But it's the sentence that would have saved two quarters, a reconciliation layer, and a lot of double-delivered tacos.

The Root Problem

This wasn't about microservices. Not about events. Not about technology.

No enforced ownership of the capability.

Conway's Law isn't the constraint. It's the mirror. If your system is fragmented, it's not because teams aren't communicating. It's because no one owns the capability.

References & Influences

- How Do Committees Invent? by Melvin Conway (1967). The original paper.

- Microservices by Martin Fowler. Architecture patterns and trade-offs.

- Domain-Driven Design by Eric Evans. Bounded contexts, strategic design, ubiquitous language.

- Building Microservices by Sam Newman. Service boundaries and organizational alignment.

- Team Topologies by Matthew Skelton & Manuel Pais. Interaction modes and the Inverse Conway Maneuver.

- Inverse Conway Maneuver (ThoughtWorks Technology Radar).

- Spotify Engineering Culture by Henrik Kniberg. How autonomous squad ownership reduced capability duplication.

- Platform Engineering (Gartner). Why shared capability ownership through platforms reduces redundant integrations.