Govern or Get Replaced: Why AI-Native Startups Are Positioned to Eat Your Platform Alive

The business case for AI-augmented architecture governance, with real industry research from 49 sources, architecture designs, and a self-assessment you can run on your own org.

In a world where AI-augmented development teams can prototype in weeks what used to take quarters, the question isn't whether disruption is coming. It's whether your architecture can absorb it. The teams that will move fastest aren't the biggest. They're the ones that know what they own.

AI-native startups don't have your customer base. Not yet. But they have something you don't: a clean architecture, zero tech debt, and an AI-augmented development velocity you can't match while your teams are still arguing about who owns the fulfillment pipeline.

- The problem: Engineering fragmentation costs mid-to-large SaaS companies $35–60M/year across developer productivity, cloud waste, duplication, incidents, and compliance (categories overlap; realistic recovery is 15–30%).

- The solution: An AI-augmented governance platform that provides visibility, cost attribution, and duplicate detection, without the friction of traditional governance.

- The ROI: 230–800% over 3 years on a $2.8–4.5M investment. Baseline tier assumes realistic ramp-up; higher tiers require full organizational adoption.

- Start here: Take the 15-question self-assessment to score your org. Use the interactive ROI calculator with your own numbers.

- Build vs. buy is shifting: AI agents make lightweight custom governance viable. Open source + agents can replace expensive SaaS governance tools for many orgs. Start commercial if speed matters, but the calculus is tilting toward build.

- The future role: "AI Agent Director," managing swarms of agents, measured by output shipped. Engineers architect; agents build.

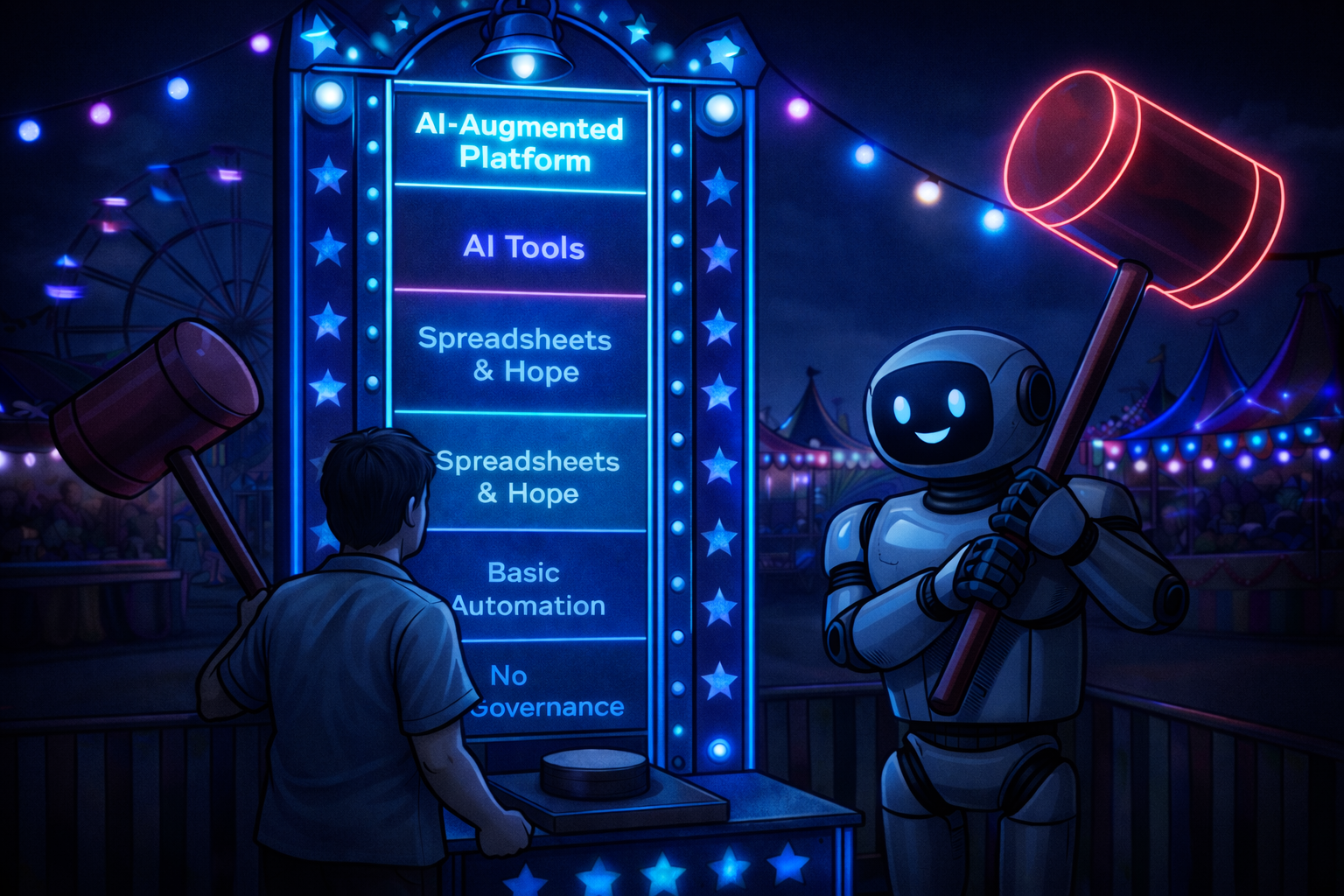

The Organizational Evolution, and Where Companies Get Stuck

Every engineering organization evolves through the same stages. The question is whether you adapt before entropy compounds.

Small team, everyone knows everything. No governance needed because everyone is in the same room. Ship daily.

Teams multiply. Conway's Law kicks in. Architecture mirrors org chart. Still fast, but tribal knowledge starts to strain.

Hundreds of engineers, thousands of pipelines. Nobody has the full picture. Decisions slow down. Information lives in spreadsheets and Slack threads.

Duplicate capabilities emerge. Orphaned infrastructure accumulates. Speed decreases while cost increases. Most mid-to-large SaaS companies are here, and many don't realize it.

Heavy-handed governance: mandatory forms, quarterly audits, approval committees. Teams route around the process. Speed decreases further. The cure is worse than the disease.

The Entropy Tax: What Fragmentation Actually Costs

The entropy tax is the cumulative cost an organization pays when nobody has a clear picture of what it owns, who owns it, and what it costs. It's not a line item. It's the invisible drag across developer productivity, cloud waste, duplicate work, incident response, talent attrition, and compliance labor.

Engineering fragmentation costs mid-to-large SaaS companies $35–60M/year across six categories: developer productivity ($10–23M), cloud waste ($6–14M), architecture duplication ($2–11M), incident response ($3–10M), talent attrition ($3–6M), and compliance ($4–10M).

| Category | Benchmark | Annual Cost |
|---|---|---|
| Developer Productivity | | |
| Context-gathering & searching | 4–7 hrs/week lost (Cortex 2024) | $6–12M |
| PR review routing to wrong people | 24–48hr avg wait. 2x cycle time if >24hr (LinearB) | $1–3M |
| Cross-team coordination overhead | 20–35% of knowledge worker time (HBR) | $3–8M |
| Cloud & Infrastructure Waste | | |
| Orphaned / unattributed resources | 15–22% waste in FinOps-mature orgs (Flexera 2024) | $5–11M |
| RI / savings plan underutilization | 30–40% of reservation spend wasted (CloudHealth) | $500K–1.5M |
| Tag non-compliance (no cost attribution) | Only 30% can attribute costs to teams (FinOps Foundation) | $500K–1M |
| Architecture & Duplication | | |
| Duplicate capability development | Based on Microsoft Research's finding that diffuse ownership doubles defect density, industry estimates place the cost of each Conway's Law violation at $500K–$2M/yr in duplicate maintenance | $1–8M |
| Change failure rate from blind deploys | 15–25% CFR (Change Failure Rate) for low performers (DORA, DevOps Research and Assessment) | $500K–2M |
| API breaking changes without consumer visibility | 52% of devs say this is biggest pain (Postman 2023) | $300K–1M |
| Incidents & Reliability | | |
| Incident triage waste (routing) | 25–40% of response time (PagerDuty 2022) | $2–5M |
| Extended MTTR (Mean Time to Recovery) (no runbooks / deps) | $5,600/min downtime (Gartner, widely cited industry benchmark) | $500K–5M+ |
| Supply chain response (Log4j-type) | 72% still vulnerable at 12 months (Tenable) | $1–5M/event |
| People & Talent | | |
| Attrition from poor tooling / DX | 60% considered leaving over tooling (Atlassian/DX 2024) | $1.5–3M |
| Knowledge loss during attrition | 20–40% of departure cost is knowledge drain (SHRM) | $1–3M |
| Manager decision-making slowness | 37% of exec time on decisions, half ineffective (McKinsey 2019) | $500K–1.5M |
| Compliance & Legal | | |
| Audit prep labor (PCI / SOX / SOC2) | $1.5–3M/yr SOX alone (Protiviti 2023) | $2–4M |
| GDPR / DSAR (Data Subject Access Request) response | $1,400–1,600 per request (Gartner). 200–500+/month for enterprises. | $1–3M |
| License audit true-up exposure | 73% audited annually. Avg true-up $250K–3M (Flexera 2023) | $500K–2M |
| Vendor overspend (no usage visibility) | 25–30% software license overspend (Gartner) | $1–3M |
| Total Entropy Tax | | $35–60M/year |

Note: The $35–60M range represents the total addressable problem space, not all simultaneously recoverable. Some categories overlap significantly (e.g., context-gathering waste and cross-team coordination; cloud waste and tag non-compliance). A realistic recovery target is 15–30% of the total addressable cost. PCI-regulated environments may also show higher apparent waste due to intentional over-provisioning for isolation and DR.
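To make the recovery guidance concrete, here is a small arithmetic sketch. It only multiplies the figures stated above (the $35–60M addressable range and the 15–30% recovery share); the function name is illustrative, and pairing the low rate with the low total is an assumption about how to read the range.

```python
# Illustrative arithmetic only: applies the 15-30% recovery guidance
# to the $35-60M/year addressable range quoted in the note above.
ADDRESSABLE_M = (35.0, 60.0)   # $M/year, total addressable entropy tax
RECOVERY_RATE = (0.15, 0.30)   # share considered realistically recoverable


def recoverable_range(addressable, rate):
    """Return (low, high) in $M/year: low total x low rate, high total x high rate."""
    return addressable[0] * rate[0], addressable[1] * rate[1]


low, high = recoverable_range(ADDRESSABLE_M, RECOVERY_RATE)
print(f"Realistic recovery: ${low:.2f}M to ${high:.2f}M per year")
```

Under those assumptions the realistic target is roughly $5–18M a year, which is the number to carry into a business case rather than the headline total.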

Before Spotify built Backstage, developers spent 60 minutes per day searching for the right service, team, or documentation. After deploying their service catalog: >50% reduction. Same problem. Same scale.

Real-World Proof: Companies That Paid the Entropy Tax

The AI Inflection: Why This Is Now Existential

AI coding capability has evolved from autocomplete (2022) to autonomous engineering (2025) in three years. 46% of code in Copilot-enabled files is AI-generated (GitHub). More than 25% of Google's new code is AI-generated. On SWE-bench, 50%+ of real GitHub issues are solved autonomously. Each wave of disruption is faster: cloud-native took ~10 years, API-first took ~3–5, and AI-native is projected to take ~2–3.


"The IT department of every company will become the HR department of AI agents." – Jensen Huang, CES 2025


AI acceleration makes one existing problem dramatically worse: when two teams unknowingly build the same capability, AI helps them build it faster, doubling the waste at double the speed. That's Conway's Law in the age of agents.

Conway's Law: The $500K Mistake Nobody Catches

Microsoft Research (2008) found that organizational structure was the strongest predictor of software defect density, stronger than code complexity, test coverage, or churn.

Components with diffuse ownership had 2x the defect density.

Yet most companies have zero tooling to detect when two teams are unknowingly building the same capability.

    flowchart TB
      subgraph ORG["Organization"]
        direction TB
        VP1["VP - Payments"] --> T1["Team Alpha"]
        VP2["VP - Operations"] --> T2["Team Beta"]
      end
      subgraph SYS["System Architecture"]
        direction TB
        CAP["Same Business Capability"] --> S1["Pipeline A (Java)"]
        CAP --> S2["Pipeline B (.NET)"]
      end
      T1 -. "builds" .-> S1
      T2 -. "builds" .-> S2
      style ORG fill:#0f0f1a,stroke:#a78bfa,color:#f5f5fa
      style SYS fill:#0f0f1a,stroke:#ef4444,color:#f5f5fa
      style VP1 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
      style VP2 fill:#1a1040,stroke:#a78bfa,color:#f5f5fa
      style T1 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
      style T2 fill:#2d1010,stroke:#ef4444,color:#f5f5fa
      style CAP fill:#1a1000,stroke:#fbbf24,color:#fbbf24
      style S1 fill:#0c4a6e,stroke:#0ea5e9,color:#f5f5fa
      style S2 fill:#2d1010,stroke:#ef4444,color:#f5f5fa

Two VPs, two teams, two pipelines, same capability. Neither team knew about the other. Based on Microsoft Research's finding that diffuse ownership doubles defect density, industry estimates place the cost of each Conway's Law violation at $500K–$2M/year in duplicate maintenance.
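The detection itself does not need to be clever once pipelines carry capability tags. A minimal sketch, assuming a flat list of pipeline records with a team and a capability tag (the `Pipeline` fields and the sample names are hypothetical, not a real schema):

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Pipeline:
    name: str
    team: str
    capability: str  # business capability tag (hypothetical field)


def find_duplicate_capabilities(pipelines):
    """Return {capability: sorted team names} for capabilities built by 2+ teams."""
    teams_by_capability = defaultdict(set)
    for p in pipelines:
        teams_by_capability[p.capability].add(p.team)
    return {
        capability: sorted(teams)
        for capability, teams in teams_by_capability.items()
        if len(teams) > 1
    }


pipelines = [
    Pipeline("Pipeline A (Java)", "Team Alpha", "virtual-card-processing"),
    Pipeline("Pipeline B (.NET)", "Team Beta", "virtual-card-processing"),
    Pipeline("Pipeline C", "Team Alpha", "onboarding"),
]
duplicates = find_duplicate_capabilities(pipelines)
print(duplicates)  # {'virtual-card-processing': ['Team Alpha', 'Team Beta']}
```

The hard part in practice is the tagging, not the grouping: two teams rarely use the same words for the same capability, which is why the tags need review before a query like this can be trusted.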

A Day Without Governance vs. A Day With It

Without: The Spreadsheet Archaeology

Your CFO asks: "How much does virtual card processing cost us?" The question triggers a cascade. Engineering managers schedule meetings. A FinOps analyst starts a spreadsheet, cross-referencing cloud billing tags (30% of resources are untagged) with Jira project codes (which don't map cleanly to pipelines). Three weeks later, you have a range: "$280K–$560K per month, depending on how you allocate shared infrastructure." The CFO asks why the range is 2x. Nobody can explain it. A follow-up meeting is scheduled.

Meanwhile, a team in another division has already started building a second virtual card processing pipeline because they didn't know the first one existed.

With: The Platform Query

Same question. The FinOps analyst opens the governance platform and queries: "What is the monthly cost of virtual card processing?" The entity graph maps the capability to 12 pipelines across 3 teams, aggregates cloud spend from tagged resources, and returns: $420K/month, broken down by team, with a 6-month trend line showing a 15% increase since the new cache layer was added.

The platform also flags that two teams are implementing the same capability, with an estimated duplication cost: $600K/year. The architecture review board has the data to make a consolidation decision before the next sprint planning.
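A rough sketch of the roll-up behind that answer, assuming each pipeline's spend is split across capabilities by explicit percentage shares. The pipeline names, shares, and dollar amounts are invented so that the total lands on the $420K figure in the story; they are not real data.

```python
from collections import defaultdict

# Hypothetical inputs: (pipeline, capability, share of that pipeline's cost).
ALLOCATIONS = [
    ("cards-api", "virtual-card-processing", 1.0),
    ("shared-cache", "virtual-card-processing", 0.5),
    ("shared-cache", "onboarding", 0.5),
]
MONTHLY_COST = {"cards-api": 300_000.0, "shared-cache": 240_000.0}  # USD, made up


def cost_by_capability(allocations, monthly_cost):
    """Roll pipeline spend up to capabilities using percentage allocation."""
    # Shares per pipeline must sum to 1, otherwise spend is lost or double-counted.
    share_totals = defaultdict(float)
    for pipeline, _capability, share in allocations:
        share_totals[pipeline] += share
    for pipeline, total in share_totals.items():
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"{pipeline}: shares sum to {total}, expected 1.0")

    totals = defaultdict(float)
    for pipeline, capability, share in allocations:
        totals[capability] += monthly_cost[pipeline] * share
    return dict(totals)


costs = cost_by_capability(ALLOCATIONS, MONTHLY_COST)
print(costs["virtual-card-processing"])  # 420000.0
```

The share check is the point of the design: the 2x range in the spreadsheet version comes from nobody having agreed how shared infrastructure is allocated, so the allocation has to be recorded data rather than an analyst's judgment call.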

What AI-Augmented Governance Actually Looks Like

Not more meetings. Not another spreadsheet. It's a 5-tier architecture governance platform that uses AI to operate at scale:

Auto-sync CI/CD, cloud, HR, and project tools. Zero manual entry.

Governed relationship graph connecting business intent to technical reality.

Capability tagging, overlap detection, impact assessment, cost analysis.

Search, scorecards, AI chat, draft review, dashboards.

Deploy gates, cost reports, sunset recommendations, compliance evidence.

Nor is it a CMDB, a category that fails to deliver intended value 80% of the time (Gartner, 2019; many deliver partial value but miss ROI targets).

Platform Architecture (5 Tiers)


Diagram: the five tiers and their components.

- Tier 1: Pipelines, builds, repos; Resources, costs, metrics; Teams, cost centers; Backlogs, roadmaps
- Tier 2: Business areas; Who owns what; What we deliver; How we deploy; What we use
- Tier 3: AI proposes, humans approve; Conway's Law violations; Blast radius in seconds; TCO per capability; Tech lifecycle compliance
- Tier 4: "Who owns this?"; Domain maturity grades; NL queries over graph; Human-in-the-loop; Pipeline status dashboard; Change blast radius; Cost attribution views
- Tier 5: Block ungoverned deploys; FinOps attribution; Data-driven decommission; Auto-generated docs; PCI/SOX audit evidence

CAPABILITIES

Ingest pipelines, builds, repos, deployment history from GitHub Actions, Azure DevOps, GitLab CI, Jenkins

Pull resource groups, compute costs, storage metrics from Azure, AWS, or GCP billing APIs

Map teams to cost centers, reporting structure, headcount from Workday, BambooHR, or HRIS (HR Information System)

Connect backlogs, roadmaps, initiative data from Jira, Aha!, Azure Boards, Linear

Import existing configuration items and incident data to enrich the entity graph


KEY USE CASES

- Automated pipeline discovery, no manual catalog entry

- Cloud cost import for capability-level attribution

- Org chart alignment for ownership validation

CAPABILITIES

Business areas (Payments, Risk, Onboarding). Contains teams, capabilities, and value streams.

Engineering teams with members, ownership, technology portfolios, and capacity metrics.

Business capabilities mapped to pipelines with percentage allocation.

Services and APIs that implement capabilities. The bridge between intent and code.

CI/CD pipelines with build history, deploy frequency, and stage mappings.

Catalog with lifecycle stages: Emerging, Core, Contained, Not Allowed.

End-to-end flows spanning multiple domains.

Business sponsors linked to domains. Change proposals with impact analysis.


KEY USE CASES

- Capability-to-team ownership mapping

- Technology lifecycle tracking across pipelines

- Domain boundary enforcement (Conway's Law)

- SOX-compliant audit trail for all changes

- Impact assessment in 30 seconds

RELATIONSHIPS (28 JOIN TABLES)

Conway's Law detection when boundaries drift.

Every pipeline has exactly one owning team.

Percentage allocation for shared pipelines.

Lifecycle compliance checks at deploy time.

Capacity analysis and key-person dependency detection.

GOVERNANCE INFRASTRUCTURE

Every change is a proposal. Batched, reviewed, committed atomically. Full audit trail.

Normalize environment names to 7 canonical stages. 804+ mappings.

Connects Architecture Decision Records to affected entities.
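The "every change is a proposal" rule can be sketched as an all-or-nothing batch commit. This is a simplified in-memory illustration, not the platform's implementation: the graph shape, the proposal tuple, and `commit_batch` are all assumptions made for the example.

```python
import copy


class ProposalError(Exception):
    """Raised when a batch cannot be committed."""


def commit_batch(graph, proposals, approved_by):
    """Apply every proposal or none; return (new_graph, audit_entries).

    graph:     {entity: {attribute: value}}
    proposals: list of (entity, attribute, expected_old_value, new_value)
    """
    if not approved_by:
        raise ProposalError("batch has no approver")
    working = copy.deepcopy(graph)  # the live graph is never mutated
    for entity, attr, expected, new in proposals:
        current = working.get(entity, {}).get(attr)
        if current != expected:
            raise ProposalError(f"stale proposal for {entity}.{attr}")
        working.setdefault(entity, {})[attr] = new
    audit = [
        {"entity": e, "attribute": a, "old": old, "new": new, "approved_by": approved_by}
        for e, a, old, new in proposals
    ]
    return working, audit


graph = {"cards-api": {"owner": None}}
batch = [("cards-api", "owner", None, "Team Alpha")]
new_graph, audit = commit_batch(graph, batch, approved_by="domain-lead")
print(new_graph["cards-api"]["owner"], len(audit))
```

Working on a copy means a failed batch leaves the original untouched, and the expected-old-value check rejects proposals drafted against data that has since changed. A real system would get the same guarantees from a database transaction.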

ARCHITECTURE ARTIFACTS ENABLED

Blast-radius: affected teams, capabilities, pipelines.

AI-generated ADRs grounded in entity data.

Structured docs with entity data pre-populated.

Auto-generated. L1: domain. L2: capability + ownership.

Cross-team coordination and handoff requirements.

Pipeline → capability → domain cost aggregation.

Deprecated tech with affected pipelines, effort, timeline.

PCI, PII, regulated systems, queryable in seconds.

CAPABILITIES

Scans repositories and proposes business capability tags. Teams review and approve. Months of work reduced to hours.

Identifies when multiple teams independently implement the same capability. Estimates duplication cost.

Queries the dependency graph for blast-radius analysis. 30 seconds, not 2 weeks of meetings.

Connects cloud spend to pipelines to capabilities. Answers "How much does X cost?" with computed numbers.

Checks technology lifecycle compliance. Flags "Not Allowed" technologies still in production.

Monitors cost spikes, build failure patterns, and governance score regressions.
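The blast-radius query described above is, at its core, a graph traversal. A minimal sketch using breadth-first search over a made-up dependency map (entity names are hypothetical, and edges point from an entity to the things that depend on it):

```python
from collections import deque

# Hypothetical data: entity -> entities that depend on it.
DEPENDENTS = {
    "auth-lib": ["cards-api", "onboarding-api"],
    "cards-api": ["fulfillment-pipeline"],
    "onboarding-api": [],
    "fulfillment-pipeline": [],
}


def blast_radius(graph, changed):
    """Return every entity that transitively depends on `changed`, in BFS order."""
    seen = {changed}
    queue = deque([changed])
    affected = []
    while queue:
        node = queue.popleft()
        for dependent in graph.get(node, []):
            if dependent not in seen:  # guards against cycles and repeats
                seen.add(dependent)
                affected.append(dependent)
                queue.append(dependent)
    return affected


print(blast_radius(DEPENDENTS, "auth-lib"))
# ['cards-api', 'onboarding-api', 'fulfillment-pipeline']
```

The traversal is quick; what takes two weeks of meetings is reconstructing the edges by hand. Once the graph is kept current, the 30-second answer follows.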


KEY USE CASES

- Overnight capability tagging of 4,000+ pipelines

- Automated Conway's Law violation detection with cost estimates

- Real-time impact assessment for deprecation decisions

- Cost spike root-cause analysis

CAPABILITIES

"Who owns Pipeline X?" Instant answers from the entity graph. Replaces Slack-hunting.

Per-domain governance maturity scores (A-F). Ownership %, tagging %, tech health %. Gamifies governance.

Natural language queries. "Show me teams in Payments using deprecated tech." Context-aware per page.

Shopping cart of proposed changes. Review, approve, reject, modify. Full diff view. Atomic commits.

Pipeline status across all environments. Color-coded dots. Filter by domain, team, value stream.

Visual blast-radius for proposed changes. Affected teams, pipelines, capabilities.

Cost attribution by capability, domain, team. Orphaned resource discovery. Chargeback reports.

Visual lifecycle view. Core vs Contained vs Not Allowed. Migration progress tracking.

Visual map of all capabilities with ownership, health, and cost. Identifies gaps and overlaps.

Organizations have thousands of unique stage names across pipelines (e.g., "ABC Deploy", "QA-Staging", "prod-release"). The stage mapper normalizes these to 7 canonical environments (DEV, CI, QA, STAGE, PROD, etc.) and stage types (Build, Test, Deploy, Destroy). Per-pipeline overrides let teams customize: if "ABC Deploy" is QA for Team Alpha but DEV for everyone else, the override handles it. The Build Wall depends on accurate stage mappings to show pipeline health by environment.
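The override behavior is easy to picture as a two-level lookup. A sketch under stated assumptions: the mapping entries other than "ABC Deploy" are invented, the pipeline identifier format is hypothetical, and only the environments named above are used.

```python
# Global mapping from raw stage names to canonical environments (entries are illustrative).
GLOBAL_STAGE_MAP = {
    "ABC Deploy": "DEV",
    "QA-Staging": "QA",
    "prod-release": "PROD",
}
# Per-pipeline overrides, keyed by (pipeline, raw stage name).
PIPELINE_OVERRIDES = {
    ("team-alpha/cards-api", "ABC Deploy"): "QA",
}


def canonical_environment(pipeline, raw_stage):
    """Resolve a raw stage name; overrides win, unmapped names return None."""
    override = PIPELINE_OVERRIDES.get((pipeline, raw_stage))
    if override is not None:
        return override
    return GLOBAL_STAGE_MAP.get(raw_stage)


print(canonical_environment("team-alpha/cards-api", "ABC Deploy"))  # QA
print(canonical_environment("team-beta/ledger", "ABC Deploy"))      # DEV
```

Returning `None` for unmapped names, rather than guessing, keeps unknown stages visible so someone can map them.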

Detects when deployments skip expected stages or environments. If a build reaches PROD without passing through QA or STAGE, the system flags it. Catches artifact mismatches across environments (different build in DEV vs what deployed to PROD). Configurable per-pipeline; some pipelines legitimately skip stages, others must follow the full progression. Alerts go to the owning team and release manager.
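A sketch of the progression check, assuming a time-ordered list of deploy events already normalized to canonical environments. It treats both QA and STAGE as required before PROD, which is one reading of the rule above; the `exempt` set stands in for the per-pipeline configuration, and all names are hypothetical.

```python
def progression_violations(events, required=("QA", "STAGE"), exempt=frozenset()):
    """Flag PROD deploys of builds that have not been seen in every required environment.

    events: iterable of (pipeline, build_id, environment), oldest first.
    Returns a list of (pipeline, build_id, missing_environments).
    """
    reached = {}  # (pipeline, build_id) -> environments seen so far
    violations = []
    for pipeline, build_id, env in events:
        seen = reached.setdefault((pipeline, build_id), set())
        if env == "PROD" and pipeline not in exempt:
            missing = [r for r in required if r not in seen]
            if missing:
                violations.append((pipeline, build_id, missing))
        seen.add(env)
    return violations


events = [
    ("cards-api", "build-101", "DEV"),
    ("cards-api", "build-101", "QA"),
    ("cards-api", "build-101", "STAGE"),
    ("cards-api", "build-101", "PROD"),
    ("ledger", "build-7", "DEV"),
    ("ledger", "build-7", "PROD"),
]
print(progression_violations(events))  # [('ledger', 'build-7', ['QA', 'STAGE'])]
```

Because the check is keyed on the build identifier, it also catches the artifact-mismatch case: a different build showing up in PROD has no QA or STAGE history of its own.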

Engineers submit feature requests and bug reports directly through the AI Chat. The chat structures requests into a consistent format (category, severity, affected entity, steps to reproduce) and adds them to a prioritized backlog. Engineering leads review suggestions to decide what to implement. Keeps feedback in the platform where the context lives, not scattered across Slack, Jira, and email.


KEY USE CASES

- Developer finds service owner in 30 seconds (was 47 min)

- Domain lead reviews governance score weekly

- PM checks impact of proposed deprecation

- FinOps identifies $180K/mo in orphaned resources

- Architect detects capability overlap between teams

- CISO runs "show me all PCI-scoped systems" query

- Platform eng maps 200 unique stage names to 7 canonical environments

- Team overrides "ABC Deploy" from DEV to QA for their pipelines only

- Alert: build deployed to PROD without passing QA or STAGE

- Engineer submits feature request through AI chat with structured context

- Eng lead reviews prioritized feedback backlog from in-app submissions

CAPABILITIES

Pre-deploy API check. Pipeline must have ownership, capability tags, tech compliance. Blocks ungoverned deploys.

Monthly capability-level cost reports. FinOps attribution for chargeback.

Data-driven decommission proposals. High cost + low activity + alternative = sunset candidate.

Auto-generated ADRs from governance decisions. Full decision chain with cost impact.

PCI/SOX audit evidence from the entity graph. Full ownership chain in seconds.

Pre-release risk assessments. Capabilities affected, teams involved, historical stability.
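The pre-deploy check in the first item of this tier reduces to three questions about a pipeline record. A minimal sketch, assuming the record shape below (the `PipelineRecord` fields are hypothetical; the "Not Allowed" lifecycle stage comes from the technology catalog described earlier):

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

NOT_ALLOWED = "Not Allowed"  # lifecycle stage from the technology catalog


@dataclass
class PipelineRecord:
    name: str
    owner: Optional[str] = None
    capability_tags: List[str] = field(default_factory=list)
    tech_lifecycle: Dict[str, str] = field(default_factory=dict)  # technology -> stage


def gate_check(pipeline):
    """Return the reasons a deploy should be blocked; an empty list means allow."""
    reasons = []
    if not pipeline.owner:
        reasons.append("no owning team")
    if not pipeline.capability_tags:
        reasons.append("no capability tags")
    banned = sorted(t for t, stage in pipeline.tech_lifecycle.items() if stage == NOT_ALLOWED)
    if banned:
        reasons.append("uses Not Allowed tech: " + ", ".join(banned))
    return reasons


governed = PipelineRecord("cards-api", "Team Alpha", ["virtual-card-processing"], {"java-17": "Core"})
ungoverned = PipelineRecord("legacy-batch", tech_lifecycle={"python-2.7": NOT_ALLOWED})
print(gate_check(governed))    # []
print(gate_check(ungoverned))
```

Returning every failing reason at once, rather than stopping at the first, lets a team fix everything in one pass instead of discovering problems one blocked deploy at a time.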


KEY USE CASES

- CI/CD pipeline blocked from deploying untagged service

- CFO gets monthly capability cost breakdown

- Auditor receives PCI evidence package in minutes

- Architecture board reviews data-driven sunset proposal

- Release manager sees pre-deploy risk summary

Data flows top-to-bottom through the entity graph.

Use Cases: How It Works in Practice


Strategic Benefits Beyond Cost Savings

Beyond the core entropy tax, a governed capability map unlocks strategic value that's hard to quantify until you need it.

M&A Due Diligence

"Show me your full tech portfolio" takes weeks without a governance platform. With one, it's an API call. Accelerates deal timelines and reduces integration risk.

Compliance & Audit

"Show me every system with cardholder data," answered in seconds, not weeks. PCI fines: $5K–$100K/month. Avg breach: $4.45M (IBM, 2023). Also enables GDPR data residency mapping and cross-border compliance queries. The governance platform itself should be deployed within your compliance boundary.

Talent Retention

60% of devs consider leaving over poor tooling. Platform eng adopters see 25–30% less attrition (Gartner).

Addressing Common Concerns

Phased Adoption Roadmap

Convinced? Here's what to do Monday. A governance platform is a product, not a project. Adopt incrementally with measurable milestones at each phase.

Score Your Organization

How vulnerable is your org to the entropy spiral? Answer honestly.

Capability Matrix: What Each Option Covers

Not all tools cover the same ground. This matrix shows what you get out of the box, what requires customization, and where gaps remain.

| Capability | Backstage (OSS / CNCF) | Cortex (Commercial) | OpsLevel (Commercial) | Port (Commercial) | Custom Platform (build your own) |
|---|---|---|---|---|---|
| Cost & Positioning | | | | | |
| Annual cost | Free + 2–3 FTE | $50–150K | $50–150K | $40–120K | $2.8–3.9M / 3yr |
| Gartner positioning | CNCF Incubating | Leader (IDP) | Leader (IDP) | Visionary (IDP) | Custom build |
| Service Catalog & Ownership | | | | | |
| Service registry | Yes | Yes | Yes | Yes | Yes |
| Team ownership mapping | Yes | Yes | Yes | Yes | Yes |
| Health scorecards | Plugin | Yes | Yes | Yes | Yes |
| Technology lifecycle tracking | Plugin | Yes | Yes | Partial | Yes |
| Where Commercial Tools Excel (Custom Gaps) | | | | | |
| Managed SaaS (no infra to run) | No (self-host) | Yes | Yes | Yes | No (self-host) |
| Pre-built integrations (50+) | Plugin ecosystem | Yes | Yes | Yes | Build each |
| Incident correlation (PagerDuty/OpsGenie) | Plugin | Yes | Yes | Partial | Not yet |
| On-call schedule visibility | Plugin | Yes | Yes | Partial | Not yet |
| Self-service actions (create repo, deploy) | Plugin | Yes | Partial | Yes | Not yet |
| Governance & Change Management | | | | | |
| Draft / review system (propose → approve) | No | No | No | No | Yes |
| Stage mapping (normalize environments) | No | No | No | No | Yes |
| Environment progression alerts | No | Partial | No | No | Via API |
| Deploy gates (block ungoverned deploys) | Plugin | Yes | Yes | Partial | Via API |
| Business Capability Intelligence | | | | | |
| Business capability mapping | No | No | No | Partial (Blueprints can model capabilities) | Yes |
| Capability cost attribution (FinOps) | No | No | No | No | Yes |
| Duplicate capability detection | No | No | No | No | Yes |
| Blast-radius impact assessment | No | Partial | Partial | Partial | Yes |
| Sunset recommendations (data-driven) | No | No | No | No | Yes |
| AI & Architecture | | | | | |
| AI capability tagging | No | Emerging (Cortex AI) | No | No | Yes |
| AI chat over entity graph | No | Emerging (Cortex AI) | No | No | Yes |
| Data foundation for ADRs / L1-L2 diagrams | No | No | No | No | Enables* |
| Speed of Evolution | | | | | |
| AI-assisted feature development | No | No | No | No | Yes |
| User-submitted ideas → auto-research → spec → build | No | No | No | No | Yes |
| New reporting / analytics without vendor roadmap | Plugin (slow) | Vendor roadmap | Vendor roadmap | API only | Same day |
| Connect to any internal tool (custom MCP/API) | Plugin | Limited | Limited | API | Yes |


Note: The IDP market evolves rapidly. These assessments reflect capabilities as of early 2026. Verify current feature sets before making decisions.

The Moat: What No Commercial Tool Offers

Identifies when multiple teams independently build the same capability. Estimates cost. Routes to architecture review.

Cloud spend → pipelines → business capabilities. Answers "how much does payment processing cost us?"

AI proposes tags, detects overlaps, generates impact assessments. Humans approve via draft system.

Every metadata change is a proposal. Batched, reviewed, committed atomically. SOX-grade audit trail.

Maps "what" (capabilities) to "how" (pipelines, teams, tech). Only LeanIX does this, and it's not developer-facing.

Users submit ideas via chat → auto-research → spec generated. Platform evolves at the speed of ideas, not vendor roadmaps.

Build vs. Buy: Full IDP Landscape

The IDP market has exploded. Here's the full landscape, from OSS frameworks to enterprise architecture tools:

| Tool | Type | Cost | Strengths | Key Gap |
|---|---|---|---|---|
| Internal Developer Portals | | | | |
| Cortex | Commercial IDP | $50–150K/yr | Scorecards, maturity dashboards, initiative tracking | No cost attribution, no duplicate capability detection |
| OpsLevel | Commercial IDP | $50–150K/yr | Ownership, maturity rubrics, Terraform-aware | No FinOps, no capability mapping |
| Port | Commercial IDP | Free tier + paid | Flexible blueprints, self-service actions, RBAC | No architecture intelligence, no AI |
| Rely.io | Commercial IDP | Commercial | Auto-discovery, DORA metrics, fast setup | No FinOps, newer/smaller ecosystem |
| Compass | Atlassian bundle | Atlassian pricing | Jira/Confluence integration, scorecards | Ecosystem lock-in, shallow governance |
| Roadie | Managed Backstage | Commercial | No infra burden, added scorecards | Inherits Backstage gaps |
| Backstage | OSS framework | Free + 2–3 FTE | Plugin ecosystem, CNCF, full control | You build everything yourself |
| Enterprise Architecture & Governance | | | | |
| LeanIX (SAP) | Enterprise EA | Enterprise | Capability mapping, tech risk, transformation planning | Not developer-facing, no AI governance |
| Ardoq | Enterprise EA | Enterprise | Dynamic modeling, dependency mapping, impact analysis | Not developer-facing, no FinOps |
| FinOps & Cost Governance | | | | |
| Apptio/IBM | FinOps | Enterprise | IT financial management, TBM, cost allocation | No service catalog, no dev portal |
| Configure8 | IDP + Cost | Commercial | Service catalog with cost attribution built in | Smaller ecosystem, no architecture governance |
| The Custom Alternative | | | | |
| Custom platform | Build | $2.8–3.9M / 3yr | AI governance, duplicate detection, capability cost, draft system | You build + maintain it |

A custom build is probably not the right choice if:

- Your org has fewer than 200 engineers (Backstage or Port will suffice)

- You don't have a dedicated platform team to maintain it long-term

- Your compliance requirements are standard (SOC2/PCI without exotic needs)

- You're not running multi-cloud or multi-region infrastructure

- A managed IDP (Cortex, OpsLevel) covers 80%+ of your governance needs

- Spotify built Backstage as an open-source IDP rather than a custom governance platform. Their engineering org (~2,000+ engineers) found that service catalog + ownership mapping covered the majority of their governance needs. They open-sourced the result, now used by 150+ organizations.

- Netflix built ConsoleMe, Zuul, and other purpose-built tools rather than a unified governance platform. Their philosophy: invest in narrow, high-ROI internal tools and open-source them individually.

- Zalando adopted commercial IDP tooling alongside their own open-source contributions (e.g., ZMON). For a fast-scaling European e-commerce org, pre-built integrations and speed of deployment mattered more than full customization.

The ROI

Before & After: What Actually Changes

| Metric | Before Governance | After Governance | Impact |
|---|---|---|---|
| "Who owns this service?" | 47 min avg (Slack-hunting) | 30 seconds (platform query) | ~99% faster |
| Incident triage routing | 25–40% of response time wasted | Direct page to owning team | $2–5M/yr saved |
| "How much does capability X cost?" | 3-week investigation, 2x range | 30-second query, exact number | Decision-ready data |
| Duplicate capability detection | Discovered post-mortem (if ever) | Flagged at ideation with cost estimate | $500K–$2M/yr per violation |
| Cloud orphan discovery | Manual spreadsheet audit | Automated scan, decommission list | $1.5–5.5M/yr recovered |
| Audit prep (PCI/SOX) | Weeks of cross-team evidence gathering | Minutes (entity graph query) | 80%+ labor reduction |
| New engineer onboarding | Weeks to find owners, understand topology | Day 1 (searchable platform) | Productive 2 weeks sooner |
| Technology lifecycle compliance | Discovered during incidents | Deploy gates block at CI/CD | Proactive, not reactive |

Interactive ROI Calculator


Value Breakdown by Category


| Value Category | Who Benefits | What Changes | Annual Value |
|---|---|---|---|
| Cost Savings: Direct Budget Reduction | | | |
| Cloud waste recovery | FinOps, CFO | Orphaned resources decommissioned | $1.6M |
| Duplicate capability prevention | Architects, VP Eng | Conway's Law violations caught before building | $1.0M |
| Governance meeting reduction | Team leads, managers | Scorecards replace status meetings | $1.0M |
| Velocity: Engineers Ship Faster | | | |
| Developer context-gathering recovery | All engineers | Search time drops from 5 hrs/week to 2 | $2.0M |
| Impact assessment acceleration | PMs, architects | 2-week meetings → 30-second queries | $0.4M |
| Faster engineer onboarding | New hires | Productive 2 weeks sooner | $0.9M |
| Incident cost reduction (P1+P2) | On-call, SRE | Triage + downstream + SLA costs | $0.5M |
| Risk Reduction: Avoided Costs & Liability | | | |
| Compliance audit acceleration | Compliance, auditors | PCI/SOX evidence in minutes | $0.5M |
| Security surface reduction * | CISO, security | Deprecated tech flagged at deploy | $0.1M |
| Talent retention improvement | HR, eng managers | Fewer departures from better DX | $1.5M |
| Staff Transition: People Freed for Higher-Value Work | | | |
| Governance coordinators → platform | 2–3 FTEs | Transition to higher-value work | $0.5M |
| Consulting spend reduction | Arch consulting budget | Platform replaces advisory engagements | $0.2M |
| TOTAL ANNUAL VALUE | | | $8.7M |

* Security surface reduction represents operational savings only (monitoring, patching, compliance exceptions for deprecated tech). It excludes risk-adjusted breach avoidance. The average financial services breach costs $6.08M (IBM 2024). Even a small reduction in breach probability significantly exceeds this floor estimate.

3-Year Summary

Even the baseline scenario shows strong ROI. These tiers represent different levels of organizational adoption, not optimism. Baseline assumes a realistic ramp-up period; Efficiency Gains reflect tool maturity over time; Full Maturity assumes complete adoption and network effects across teams.

These figures are broadly consistent with Forrester TEI (Total Economic Impact) benchmarks of 195–300% ROI for CMDB investments (note: those TEI studies were commissioned by ServiceNow). The projected ROI runs higher than the CMDB benchmark because the platform intelligence layer includes business capability mapping, not just infrastructure CIs.
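For readers who want to sanity-check ROI figures like these, the usual definition is (total benefit − investment) / investment. The sketch below uses the $8.7M annual value and the $2.8–4.5M investment range quoted in this article; the three-year adoption ramp is a made-up assumption, not the calculator's actual tier logic.

```python
def roi(total_benefit, investment):
    """Return ROI as a fraction: (benefit - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (total_benefit - investment) / investment


ANNUAL_VALUE_M = 8.7             # $M/year at full adoption, from the value table
ADOPTION_RAMP = (0.3, 0.6, 1.0)  # hypothetical share of value realized in years 1-3
INVESTMENT_M = (2.8, 4.5)        # $M over 3 years, from the summary

benefit = sum(ANNUAL_VALUE_M * share for share in ADOPTION_RAMP)
for cost in INVESTMENT_M:
    print(f"investment ${cost}M -> ROI {roi(benefit, cost):.0%}")
```

With this particular ramp both results fall inside the 230–800% band, but that reflects the chosen assumptions rather than a reconstruction of the three tiers. Swap in your own ramp and investment to see how sensitive the headline number is.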

Investment Cost Breakdown

The benefits above are detailed with 19 adjustable sliders. The investment side deserves equal transparency. Here's a typical cost structure for a 3-year custom governance platform build:

Note: AI-assisted development (Claude Code, Cursor, Devin) reduces the effective cost by 40–60% compared to traditional development. Platform engineers spend 50%+ of their time directing AI agents rather than writing code from scratch, compressing timelines and reducing headcount requirements.

| Cost Category | Year 1 | Year 2 | Year 3 | 3-Year Total |
|---|---|---|---|---|
| People (Primary Cost Driver) | | | | |
| Platform engineers (2–4 FTE) | $360–720K | $360–720K | $360–720K | $1.1–2.2M |
| Product/UX (0.5–1 FTE) | $90–180K | $90–180K | $90–180K | $270–540K |
| Part-time: architect, FinOps, security | $60–120K | $60–120K | $60–120K | $180–360K |
| Infrastructure & Tooling | | | | |
| Cloud hosting (compute, DB, storage) | $50–100K | $60–120K | $70–150K | $180–370K |
| AI/LLM API costs (tagging, analysis) | $20–40K | $30–60K | $40–80K | $90–180K |
| Dev tooling, CI/CD, monitoring | $10–20K | $10–20K | $10–20K | $30–60K |
| Opportunity Cost | | | | |
| Engineers not building product features | Mitigated by AI-assisted development. Platform engineers spend 50%+ time directing AI agents, not writing code from scratch. | | | Variable |
| Total 3-Year Investment | | | | $1.9–3.7M |

Ranges reflect org size and location. Fully loaded cost at $180K/yr per engineer. Commercial IDP alternative: $50–150K/yr for catalog + scorecards, but excludes the AI governance layer, capability cost attribution, and duplicate detection that drive the unique ROI.
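To adapt the cost structure to your own org, the roll-up can be reproduced in a few lines. The sketch below sums the per-year low and high figures from the table (in thousands of USD); the dictionary layout and function name are illustrative choices, not part of the ROI calculator. Summing the unrounded line items gives roughly $1.83–3.67M, in line with the stated total once rounding is taken into account.

```python
# Per-year (low, high) costs in thousands of USD, copied from the table above.
COSTS_K = {
    "Platform engineers (2-4 FTE)": [(360, 720), (360, 720), (360, 720)],
    "Product/UX (0.5-1 FTE)": [(90, 180), (90, 180), (90, 180)],
    "Part-time: architect, FinOps, security": [(60, 120), (60, 120), (60, 120)],
    "Cloud hosting": [(50, 100), (60, 120), (70, 150)],
    "AI/LLM API costs": [(20, 40), (30, 60), (40, 80)],
    "Dev tooling, CI/CD, monitoring": [(10, 20), (10, 20), (10, 20)],
}


def three_year_range(items):
    """Return (low, high) totals across all line items and all years."""
    low = sum(lo for years in items.values() for lo, _ in years)
    high = sum(hi for years in items.values() for _, hi in years)
    return low, high


low_k, high_k = three_year_range(COSTS_K)
print(f"3-year total: ${low_k / 1000:.2f}M-${high_k / 1000:.2f}M")
```

Replace the tuples with your own headcount and infrastructure estimates; the opportunity-cost row is left out because the table lists it as variable.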

The Choice

Path A: Govern

Build the capability map. Invest in AI-augmented governance. Ship faster because teams build instead of search. Spend less because orphans get found. Make better decisions because impact assessments take seconds.

Path B: Don't

Keep the spreadsheets. Keep the tribal knowledge. Hope nobody notices the $35–60M entropy tax. Hope that a small AI-augmented team doesn't replicate your core value while you're still searching for who owns the fulfillment pipeline.

But this isn't just about survival. It's about what your organization becomes with governance. Your engineers build instead of search. Your architects design instead of audit. Your CFO knows what capabilities cost, not just what teams cost. Your on-call engineers find owners in seconds, not Slack threads. Your new hires are productive from week one. That's the real ROI: not just money saved, but potential unlocked.

Tools & Repositories to Get Started

| Tool | Category | What It Does | Link |
|---|---|---|---|
| Service Catalogs & Developer Portals | | | |
| Backstage | IDP (OSS) | Service catalog, TechDocs, scaffolding, plugin ecosystem. CNCF Incubating. | 29K+ stars |
| Roadie | Managed Backstage | Hosted Backstage with added scorecards (Tech Insights). No infra burden. | roadie.io |
| Port | IDP | Flexible blueprints, self-service actions, scorecards. Free tier available. | getport.io |
| Compass | Atlassian IDP | Component catalog integrated with Jira/Confluence/Bitbucket. | atlassian.com |
| FinOps & Cloud Cost | | | |
| OpenCost | K8s Cost (OSS) | Kubernetes cost monitoring and allocation. CNCF Sandbox. | 5K+ stars |
| Infracost | IaC Cost | Cloud cost estimates for Terraform before you deploy. Shift-left FinOps. | 11K+ stars |
| Kubecost | K8s Cost | Real-time cost allocation per namespace, service, team. K8s-native. | kubecost.com |
| Vantage | Multi-cloud Cost | Cloud cost dashboards, per-service reporting, Kubernetes cost. Free tier. | vantage.sh |
| Metadata & Data Governance | | | |
| DataHub | Metadata (OSS) | Metadata platform with lineage, discovery, governance. LinkedIn origin. | 10K+ stars |
| OpenMetadata | Metadata (OSS) | Unified metadata platform with lineage, quality, and glossary. | 5K+ stars |
| Amundsen | Discovery (OSS) | Data discovery and metadata engine. Lyft origin. | 4K+ stars |
| Security & Compliance | | | |
| Dependency-Track | SCA (OSS) | Component analysis, SBOM management, vulnerability tracking. OWASP. | 2.5K+ stars |
| Trivy | Scanner (OSS) | Container, filesystem, IaC vulnerability scanning. Aqua Security. | 24K+ stars |
| Grype | Scanner (OSS) | Vulnerability scanner for container images and filesystems. Anchore. | 9K+ stars |
| Architecture & Documentation | | | |
| MADR | ADR Templates | Markdown Architecture Decision Records. Lightweight governance. | 4K+ stars |
| Structurizr | C4 Diagrams | Architecture diagrams as code using the C4 model. Simon Brown. | structurizr.com |
| Mermaid | Diagrams (OSS) | Markdown-based diagrams (flowchart, sequence, ER, Gantt). Used in this page. | 75K+ stars |
| AI & Automation | | | |
| GPT-Researcher | Research Agent | Autonomous deep research agent. Produces reports from 20+ sources. | 26K+ stars |
| Dify | LLM Platform | LLM app development platform. Build custom AI workflows. | 50K+ stars |
| LangGraph | Agent Framework | Graph-based agent orchestration. State machines for AI workflows. | 8K+ stars |
| Standards & Frameworks | | | |
| FinOps Foundation | Framework | Cloud cost optimization framework, maturity model, community. | finops.org |
| DORA Metrics | Framework | Four key DevOps performance metrics. Google Cloud research. | dora.dev |
| Team Topologies | Framework | Team interaction patterns for fast flow. Skelton & Pais. | teamtopologies.com |
| SPACE Framework | Framework | Developer productivity dimensions (Microsoft/GitHub/UVic). | ACM Queue |

Related Case Studies

This case study covered the business case for AI-augmented governance. The related posts below explore specific aspects in depth, from Conway's Law violations in practice to a working prototype of the governance platform itself.

References

Developer Productivity & DX

Cloud Waste & FinOps

DevOps Performance & Reliability

Conway's Law & Organizational Architecture

Compliance & Audit

CMDB & Platform Engineering

AI Disruption & Competitive Intelligence

Real-World Case Studies

Tools & Platforms

Industry Analyst Reports

Expert Commentary & Keynotes

Backed by 49 industry sources including Gartner, Forrester, McKinsey, DORA, and Microsoft Research. All citations linked inline and indexed in the References section above. Last updated April 2026.